I’ve used the built-in Asp.Net Membership Provider a number of times for its simplicity and straight forward API. There are a few mystical elements that can trip you up, if you don’t understand how the provisioning process works. There are two primary options:

I’ve used the built-in Asp.Net Membership Provider a number of times for its simplicity and straight forward API. There are a few mystical elements that can trip you up, if you don’t understand how the provisioning process works. There are two primary options:

Manual

I generally create the membership database before I start working on the user interface (seems logical right?). I do that by running the membership provider wizard by opening a Command Prompt, navigating to the .Net 2.0 framework folder and running the aspnet_regsql.exe command (c:\windows\Microsoft.net\framework\v2.0.50727\aspnet_regsql.exe on a 32bit OS).

That launches the setup wizard where you can choose how you want the database configured in your SQL Server instance:

- use an existing database such as the application database or

- a stand-alone membership database if you want to have multiple applications use the same membership database

The wizard then runs the necessary scripts against the selected database (creating the database if it doesn’t exist) and populates it with the tables, views, and stored procedures that the API uses to manage the membership details.

Auto-magical

In case you hadn’t guessed, this is method enshrouded by mystery (not really, but it can seem like it). If you don’t choose to manually create your membership database (via the wizard), then the default option as defined in the machine.config file is to use a SQL Express database which ends up getting created in the application’s App_Data folder in Visual Studio.

<connectionStrings>

<add name="LocalSqlServer" connectionString="data source=.\SQLEXPRESS;Integrated Security=SSPI;AttachDBFilename=|DataDirectory|aspnetdb.mdf;User Instance=true" providerName="System.Data.SqlClient"/>

</connectionStrings>

Depending on your version of Visual Studio and perhaps the method you use to initiate the creation of this database, it might not be obvious exactly where the database is or how it is configured to point to that database file. The corresponding connection string doesn’t necessarily show up in the web.config file because it is inherited from the one defined in the machine.config.

There are multiple ways you can initiate the creation of the membership database. A couple include:

- Select “ASP.NET Configuration” from the Visual Studio “Project” menu (screenshot is from VS 2010).

- Drag a login control onto the design surface and select “Administer Website” from its context menu.

Upon first use of adding a user, role, or other activity a SQL Express database (ASPNETDB.MDF) will be created including the membership provider’s tables, views, and stored procedures used to manage the membership and personalization system.

Mystery

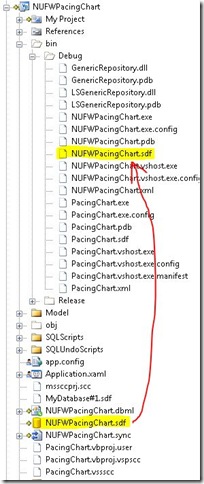

In a quick prototype project, I used the auto-magical process and added a few users. You will notice in the App_Data folder, there doesn’t appear to be an ASPNETDB.MDF, only a TeamStrength.mdf file, so where are my users stored?

Upon closer inspection with Windows Explorer, there actually is a membership database in that folder.

Mystery solved.

You may also be interested in my introduction to implementing ASP.NET Role Based Security presentation or the code samples from the presentation can be found through a link on that blog post.

Photo credit: m_bahareth / CC BY 2.0